February 4, 2022

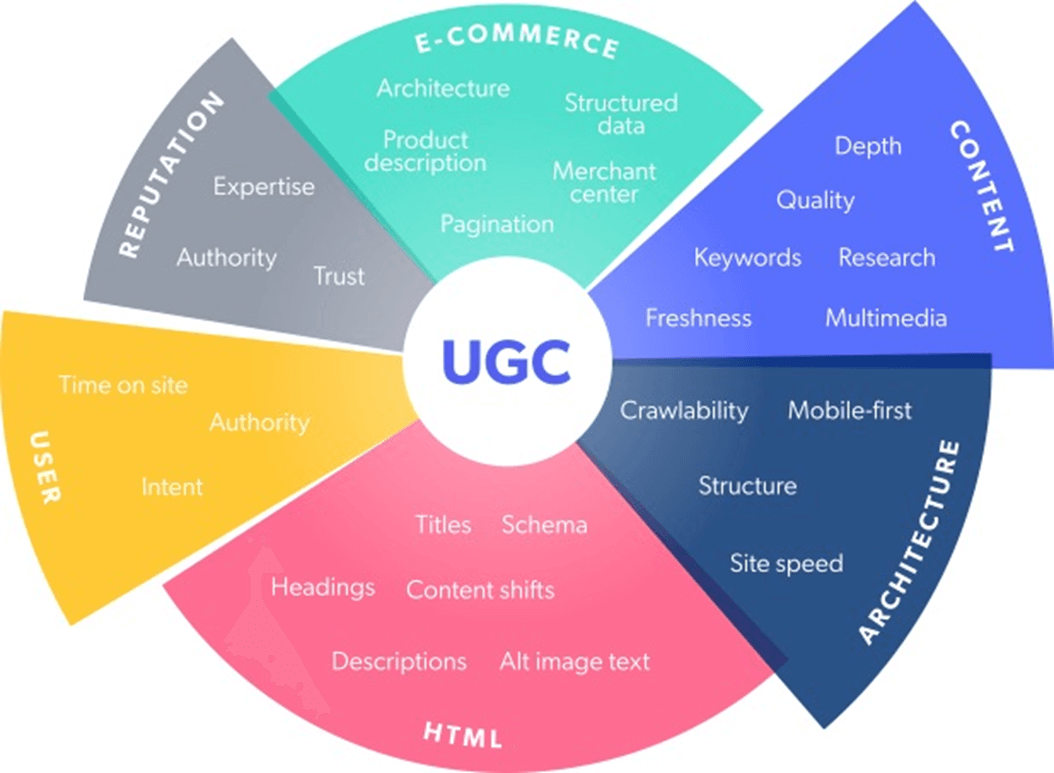

When you think of SEO and ways to increase organic traffic, your mind likely jumps straight to keyword research and tools like SEMrush. But there’s an easier, more effective method. We’re going to teach you why user-generated content (UGC), like customer ratings and reviews, images, and videos, is all you need to boost your SEO strategy.

If you prefer, you can watch our on-demand masterclass on the subject instead.

Chapters:

- How UGC increases organic traffic

- Brands and retailers utilizing UGC for SEO

- Assemble your UGC toolkit

- Calculate the SEO impact of your UGC

You’ve done everything right. You’ve researched keywords for your industry and target audience, optimized your e-commerce site pages, made your site user-friendly, and earned backlinks. Nice. But even though you’ve taken these steps to improve your search engine optimization (SEO) performance, your organic traffic is still not where it needs to be.

Enter: user-generated content (UGC). UGC is a lot more than Instagram posts of fab new outfits or hilarious product reviews. While those have excellent social and entertainment value, from a marketing standpoint, UGC also has significant e-commerce benefits. A big one that often flies under the radar is that UGC is powerful fuel for search engines.

Search engines are gateways to purchases. “As shoppers, we often turn to search engines to find the products we’re looking for,” says Brandon Klein, a senior product marketing manager at Bazaarvoice. Social media is a wonderland of product discovery, which is why Instagram SEO is so important. And Google is the place for research, comparison, and consideration. In other words, shoppers are inspired on social and ready to take action on search.

Organic search is the dominant source of global e-commerce traffic. So it’s crucial to make sure your site content is optimized to rank high in search results and is compelling enough to click. And UGC can make your site more appealing to search engines and human shoppers alike.

1) How UGC increases organic traffic

Since the dawn of the internet, search engines have refined their algorithms to produce the best results for user queries. These search engines use a variety of criteria to determine which website results best match users’ intent. Among the most important factors that provide value are keyword match and quality content.

And the more specific the content on your website is, the more chances you have to match with a search query. We don’t just search for “bluetooth headphones”; we search for “best bluetooth headphones for running” or “most comfortable bluetooth headphones for sleeping.”

Klein says, “This long-tail nature of how people use search engines to find the perfect product is a table stakes opportunity for brands and retailers to leverage consumer language to show up in a moment that counts — when shoppers are trying to make a purchase.” So how can brands figure out exactly what consumer language to include on their website? By sourcing it from other consumers via product reviews, Q&As, and social media posts.

Aside from containing long-tail keywords, UGC also improves your SEO in subtler ways.

Ratings and reviews

According to a survey of 500 brands and retailers, improved SEO is one of the top business benefits of ratings and reviews. That’s why 63% of them rely on ratings and review programs to meet their goals for SEO, among several other targets. It’s simple: brands with a continuous stream of review content will see their products rank higher in search queries. And vendors with more reviews will see their products rank higher on retailers’ websites.

More reviews = higher ranking = increased conversion = boosted sales

Product reviews and star ratings give customers a chance to provide feedback that’s not only helpful to your brand but also highly influential to other shoppers. When customers interact with reviews, the likelihood of a conversion doubles because of their trust in the perceptions and opinions of fellow shoppers.

Reviews provide an effective and consistent influx of fresh content, a major component of Google’s ranking criteria. In addition to the fresh factor, customers are contributing long-tail keywords from their own feedback. These long-tail keywords have a lot of value. When someone else searches for the same terms found in product reviews, the product pages linked with them will appear in search results. The CTR of long-tail keywords is 3-6% higher than broad searches, and they’re also less competitive to rank for than shorter keywords.
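To get a feel for which long-tail phrases your customers are already contributing, you can count the multi-word phrases that recur across review text. The snippet below is a minimal sketch, not a full keyword research tool. The `phrase_counts` helper and the sample reviews are hypothetical and only there for illustration.

```python
import re
from collections import Counter


def phrase_counts(reviews: list[str], n: int = 3) -> Counter:
    """Count every run of n consecutive words across a list of review texts."""
    counts: Counter = Counter()
    for review in reviews:
        # Lowercase and keep simple word tokens; punctuation is dropped.
        words = re.findall(r"[a-z0-9']+", review.lower())
        for i in range(len(words) - n + 1):
            counts[" ".join(words[i:i + n])] += 1
    return counts


# Hypothetical review text, for illustration only.
reviews = [
    "Great bluetooth headphones for running in the rain",
    "Best bluetooth headphones for running, super light",
]
counts = phrase_counts(reviews)

# Show only the phrases that more than one reviewer used.
for phrase, count in counts.most_common():
    if count > 1:
        print(count, phrase)
```

Phrases that show up in several reviews are reasonable candidates to compare against the search terms you want your product pages to match.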

Another perk of ratings and reviews is the coveted rich snippet. A review rich snippet displays the average star rating and the number of reviews associated with the corresponding product. It also takes up precious SERP (search engine results page) real estate, making it a focal point for the user.

A large-scale Milestone study of over 4.5 million search queries found that the click-through rate (CTR) of organic search results with rich snippets is 58%, compared to 41% for non-rich results.

As you can see in the example below, this review rich snippet for a Wayfair product category page includes an aggregate of all the star ratings and thumbnail images of the products on that page.

Klein says if the quality of the page merits those clicks from the rich snippet, the payoff will be even greater. “If this initial click-through is supported by other positive site metrics on the web page that the user lands on, like time on site, additional clicks, etc., then Google is inclined to reward that site with a higher ranking as a result of providing consumers with a high quality experience.”

To enable rich snippets for your reviews, add review markup code to the product detail pages (PDPs) where you want to display star ratings in search results. Confirm you’ve done this correctly with Google’s Rich Results Test, which replaced the older Structured Data Testing Tool. Once you’ve established there are no mistakes in your code, resubmit your XML sitemap in Google Search Console (formerly Google Webmaster Tools) so that Google indexes your changes as soon as possible.
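Review markup is usually written as schema.org structured data in JSON-LD format. Here is a minimal sketch of generating it, assuming you already have a product’s average rating and review count on hand. The `build_review_markup` function, the product name, and the numbers are hypothetical. The `Product`, `AggregateRating`, `ratingValue`, and `reviewCount` names come from the schema.org vocabulary.

```python
import json


def build_review_markup(name: str, rating_value: float, review_count: int) -> str:
    """Return a JSON-LD script tag describing a product's aggregate rating."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": round(rating_value, 1),
            "reviewCount": review_count,
        },
    }
    # Escape "<" so text inside the data cannot close the script tag early.
    payload = json.dumps(data, indent=2).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical product and numbers, for illustration only.
markup = build_review_markup("Bluetooth Running Headphones", 4.6, 128)
print(markup)
```

The printed script tag would go in the HTML of the matching PDP. A real implementation would likely include more product properties, so treat this as a starting point and validate the output before relying on it.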

Questions and answers

Offering a question and answer feature on your e-commerce site invites customers to interact with you, similar to an in-person shopping experience. This is a way for shoppers to submit questions right on the product pages they are looking at. You get an alert whenever there’s a question, and then you can respond directly. The questions and answers serve as records that other shoppers can look at when considering products.

Questions and answers result in a 98% average conversion lift for brands and retailers. And, just like reviews, questions and answers produce keyword-rich content that ranks for matching queries, thus increasing organic traffic.

And the SEO fun doesn’t stop there. Questions and answers create the ultimate resource for FAQ pages. With FAQs, you can compile all the most common and important questions from customers with your brand’s answers and solutions all in one place. Not only is this a handy customer service tool, but it’s also more content for Google to rank in search results.

FAQ rich snippets also happen to have the highest CTR (87%) of any type of search result, according to the Milestone study. This indicates FAQ pages are among the most engaging and helpful to shoppers.
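FAQ rich results also depend on structured data, typically the schema.org `FAQPage` type. The sketch below shows one way to turn customer questions and your answers into that markup. The `build_faq_markup` helper and the sample question and answer text are hypothetical.

```python
import json


def build_faq_markup(qa_pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


# Hypothetical customer questions and brand answers, for illustration only.
faq = build_faq_markup([
    ("Is the blender jar dishwasher safe?", "Yes, the jar is top-rack dishwasher safe."),
    ("Does the blender come with a warranty?", "Yes, it includes a two-year warranty."),
])
print(json.dumps(faq, indent=2))
```

The resulting JSON would sit inside a `<script type="application/ld+json">` tag on the FAQ page, and the questions and answers in the markup should match the text shoppers actually see on that page.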

Let’s look at an example from McDonald’s, which has a FAQ page that addresses customer questions while driving organic traffic. The Golden Arches does more than dominate the fast-food industry; it also knows a thing or two about SEO strategy. McDonald’s FAQ page is clearly laid out and comprehensive, with a large search bar at the top for easy navigation. Its expansive list of customer questions and corresponding company answers stands at 177 and counting.

Their FAQ page is structured by individual sections for each question so that all the subheadings contain long-tail keywords. So, it’s no wonder that the single FAQ page ranks for 300 keywords, according to ahrefs.com. This is a great example of how you can repurpose customer questions into one main resource that ranks for all the keywords found within them.

Above are some examples of the questions on the FAQ page that match common search queries. This data from ahrefs.com shows the keyword from the FAQ question, the search volume in the U.S. (Volume), and the global search volume (GV).

Visual UGC

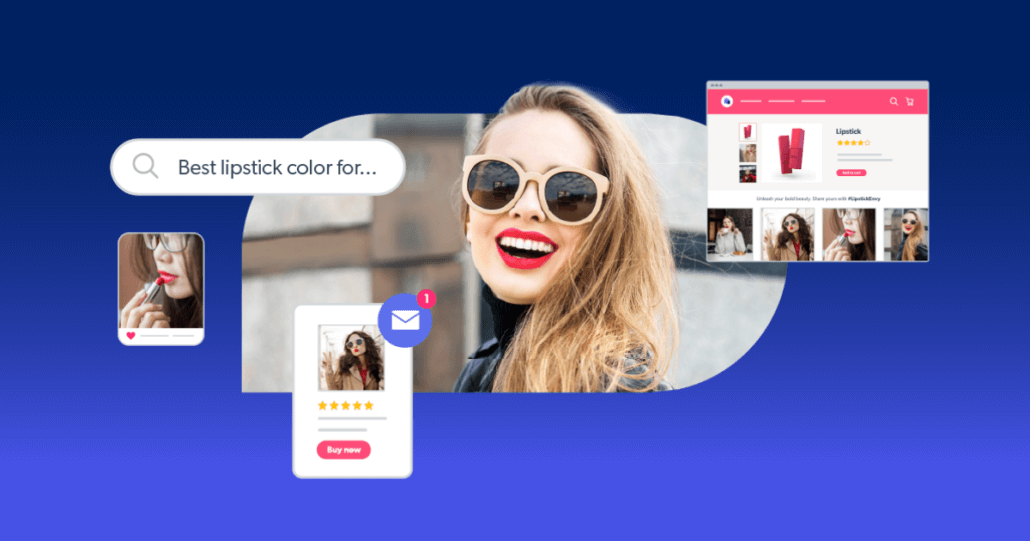

Text isn’t the only kind of content you need to optimize to increase organic traffic. Visual content — such as photos and videos of consumers using your products — plays a big role in SEO strategy as well. Google returns images and videos for approximately 25% of search queries.

That’s why it’s important to showcase visual UGC on your PDPs, so you have a variety of rich content for search engines to pull from. Then, once your site visitors are on the page, visual UGC can really work its magic. Shoppers respond to images and videos of other customers using a product. Consumers know that professional photography can be deceptive, but visual UGC shows them how your products look in the real world.

These factors contribute to website visitors spending up to 90% more time on pages with visual UGC galleries. And when users spend more time on pages, it signals high value and quality to Google, thus boosting those pages’ search ranking.

You can also share the captions from social media photos and videos along with the images. Oftentimes, these captions are fun, playful, honest, and transparent. People love to share their lives — including their purchases — on social media, so it’s great content to share on your e-commerce site. Part of the visual UGC you feature on PDPs can feed in from users’ social media accounts with their permission. The comments on their photos and videos are yet another opportunity to rank for long-tail keywords.

Take this example from Old Navy’s shoppable UGC gallery. Based on the customer’s caption, this photo could possibly appear in search results for terms like “cozy tunic sweatshirt” or “sweatshirt for leggings.”

Repurpose UGC to extend its impact even further

There’s a bonus benefit to implementing these types of UGC for SEO: you can apply their insights and verbiage to further optimize product page messaging.

Reviews, questions and answers, and visual UGC can illuminate key points, qualities, and concerns that you can then use. For example, if multiple shoppers ask if a kitchen appliance is dishwasher safe, that’s a feature to highlight in the product description.

Furthermore, these informal, efficient, and convenient insights are invaluable for improving or even developing products to better meet consumers’ needs. You can use them to highlight features that customers care about and describe particular use cases for products that you wouldn’t have anticipated before gaining that knowledge from UGC.

The toy brand KidKraft put this strategy into action when they discovered different product functions revealed in customer reviews that they hadn’t considered before. Thanks to customer feedback, they refreshed their marketing messaging and developed different versions of products, resulting in greater sales.

Another way to recycle the UGC found throughout your site is to feature particularly positive or helpful pull quotes from reviews on product, category, and home pages. You can do the same thing for relevant questions and answers that warrant space in a higher, more prominent section of your product pages.

2) Brands and retailers unleashing the SEO power of UGC

Even though UGC isn’t often at the top of the list of ways to increase organic traffic, there are plenty of clever brands and retailers that are hip to its impact.

LeTAO boosts overall SEO performance with customer reviews

The Japanese pastry and bread brand LeTAO focuses on reviews to drive traffic to their e-commerce site. LeTAO built up over 45,000 reviews and used technology to ensure search engines could find and index every single one. This steady stream of fresh content improved their search ranking, and the high volume of reviews earned them significant organic traffic.

After a year of tracking performance, LeTAO determined that their reviews program brought in 27,000 visitors to PDPs optimized with reviews and that most of those shoppers were new users. Visitors to those pages viewed an average of 10.5 pages per session and spent an average of six minutes longer than usual. The conversion rate associated with those organic visits was 5.9%, compared to 3.1% from other referral sources.
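To put a gap like that into perspective, it helps to express it as a relative lift rather than a difference in percentage points. Here is a small sketch of that arithmetic using the two conversion rates above. The `relative_lift` helper is a hypothetical name, not part of any tool mentioned in this article.

```python
def relative_lift(new_rate: float, baseline_rate: float) -> float:
    """Return the relative increase of new_rate over baseline_rate as a fraction."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be greater than zero")
    return (new_rate - baseline_rate) / baseline_rate


# Conversion rates from the LeTAO example: 5.9% for organic visits to
# review-optimized pages versus 3.1% for other referral sources.
lift = relative_lift(5.9, 3.1)
print(f"Relative conversion lift: {lift:.0%}")  # about 90%
```

In other words, a gap of 2.8 percentage points works out to organic visitors converting at nearly twice the rate of visitors from other referral sources.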

Oak Furnitureland directs traffic from social and bolsters PDP performance with visual UGC

Social media is an important source of referral traffic, and the channel is literally made for UGC. It’s where people go to leisurely browse brands and products, which inspire them to shop. In fact, almost 80% of people say UGC from social media highly influences their buying decisions.

Furniture retailer Oak Furnitureland recognized the opportunity to create a shopping experience for their social audience and invited their followers to their e-commerce site. Using the Like2buy feature, they enabled shoppable Instagram posts. When users click on posts with featured products, they’re sent to PDPs to learn more and to “add to cart.”

Over the past year, this feature resulted in 11,000 website visits and a 79% click-through rate. Oak Furnitureland keeps the social experience going once visitors land on their product pages with UGC galleries. These galleries display images their customers upload or post on social media with the #OakFurnitureLand hashtag. Visitors who engage with the galleries spend 281% more time on site, and the galleries result in a 248% increase in conversions and an average order increase of 21%.

Since website traffic is a Google ranking factor, extra referral traffic from social media can also help your site rank in search results. Google also takes into account a website’s social signals when ranking content. That means your brand’s social presence and community engagement should be a priority to increase organic traffic.

Makeup.com by L’Oreal expands UGC into blog posts

Makeup.com is an outstanding example of a major beauty brand’s blog. Completely separate from the main L’Oreal brand site, Makeup.com stands on its own as an editorial source for beauty tips, trends, recommendations, and more.

The blog uses UGC as inspiration for article topics and integrates it into the content. For example, the blog used a common beauty question as the focus of their article, “Beauty Q&A: Should I Avoid False Eyelashes If I Wear Contact Lenses?” Makeup.com recruited a board-certified ophthalmologist to lend their expertise in answering the FAQ.

In another post, “The Best Type of Bangs for Your Face Shape,” they sourced UGC from social media to showcase different hairstyles on everyday people instead of using branded photos. Blogs have a significant impact on SEO. Long-form content, like articles, keeps your website content fresh, and when optimized properly, it can rank for target keywords that will drive traffic for relevant topics.

Allswell leverages customer reviews to generate traffic from emails

Like social media, email campaigns produce a substantial amount of referral traffic for e-commerce brands. While email marketing isn’t directly related to SEO, it can support your SEO strategy and influence your site ranking. As Chris Rodgers, CEO and founder of Colorado SEO Pros, told PC Mag, “The big connection between SEO and email is the ability to promote targeted SEO content, improve off-page SEO factors, and drive qualified traffic that leads to better engagement signals for Google and other search engines.”

UGC is an effective and versatile tool for email campaigns. Featuring UGC in emails can be done in a variety of ways to achieve different goals. Allswell, the home bedding and bath brand, appeals to consumers’ trust and reliance on reviews to inspire email clicks leading to product pages.

Another approach is to highlight UGC galleries in emails with hashtags to encourage subscribers to create their own images and videos with your products. UGC can also help to personalize emails with reviews, Q&As, and visual content relevant to your subscribers’ interests and purchase history. Businesses that include UGC in their email campaigns have seen an average increase of 13% for email click-through rates and an average increase of 35% for email conversion rates.

Walmart Canada’s Spark Reviewer

It’s not just brands that can increase organic traffic. Retailers need to think about UGC for SEO too. Vendors who continuously collect fresh review content can have a higher chance of their products being discovered on retailer sites. And the more review content vendors have, the more product-specific keywords retailers have on their website to drive traffic from search.

No one knows this better than Walmart Canada. For vendors to increase sales on WalmartCanada.ca, products need to show up in a shopper’s search results. So, product pages need to be optimized for SEO. Walmart uses algorithms to rank products based on the number of sales and views, and how pages display the product name, images, features, description, and attributes.

“The products that stand out on our site have strong review volume numbers and high average ratings. Getting more reviews is one way brands can improve where their products show up, giving them more clicks to their products.”

Shariq Hasan, Associate Manager, E-commerce Merchandising Operations at Walmart Canada

How does Walmart collect all that great UGC? The retail giant partnered with Bazaarvoice to create the Spark Reviewer sampling program, enabling vendors to exchange product samples for honest reviews on Walmart.com. To date, the program has seen over 380,000 reviews collected, leading to higher product visibility and increased conversion.

Encouraging customer reviews, and other UGC, keeps your product pages fresh with new content. And the retailer websites with regularly updated keyword-rich content rank higher in search. Sounds like a win all round.

3) Assemble your UGC toolkit to increase organic traffic

To leverage UGC to improve your e-commerce SEO, you need to have a steady supply. Luckily, there are many different ways to acquire UGC. One of the most effective is to launch a product sampling campaign. In exchange for free samples, you can ask recipients to leave a review, post visual UGC on their social media accounts that includes your brand’s handles and hashtags, or both.

And to take your review volume to the next level, ReviewSource is an always-on content solution that taps into the active Influenster community of over 6.5 million members who contribute reviews for brands. It collects fresh review content for you, while you sit back and reap the rewards.

You can also use your email campaigns to ask your subscriber list to upload visual UGC to your site or leave a review in exchange for a discount code or another incentive. Alert shoppers on social media and email that you have a question and answer feature if they have any questions about your products or services.

4) Calculate the SEO impact of your UGC

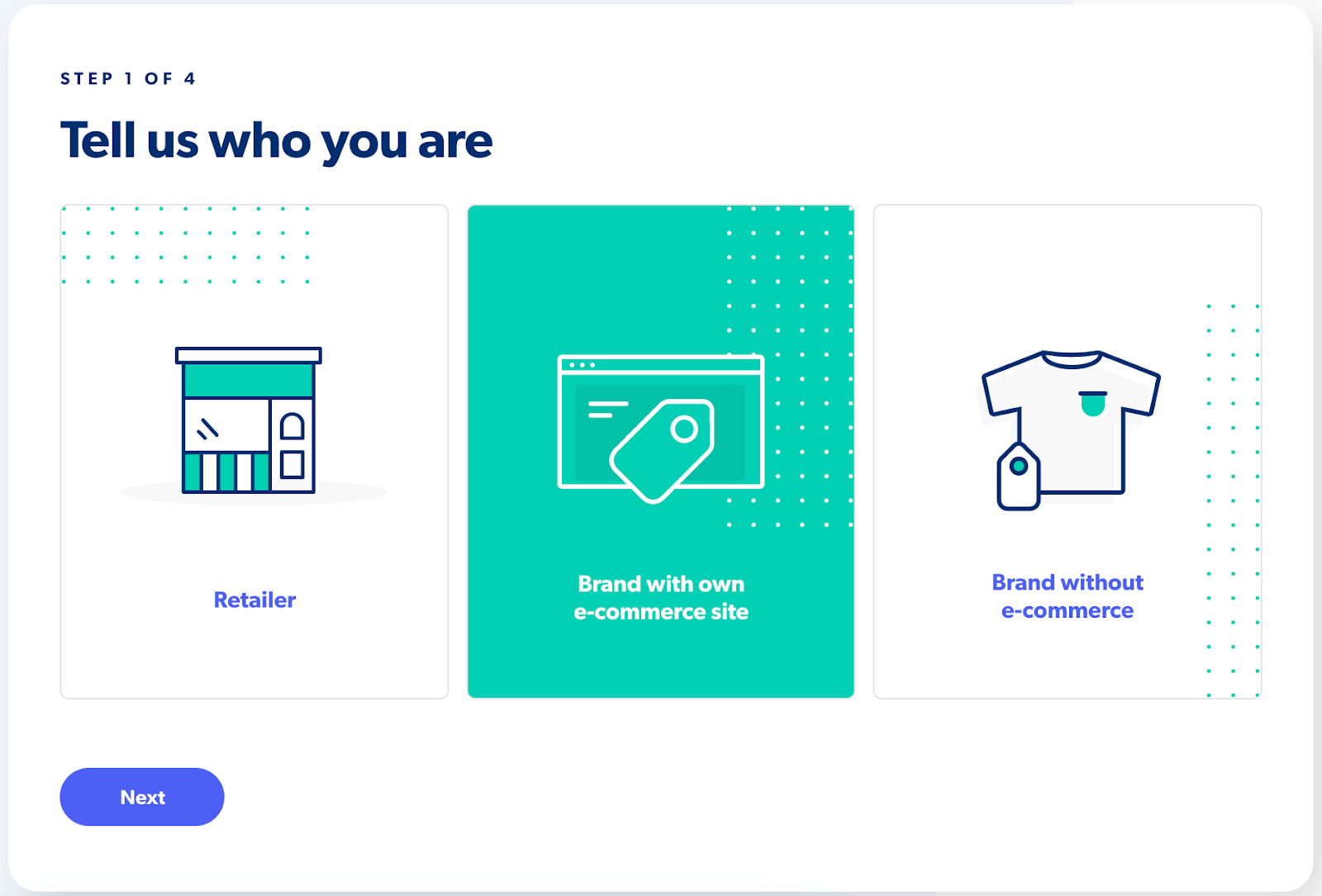

We can sing the praises of using UGC to increase organic traffic all we want. But really, like all marketing strategies, hard numbers do more talking than words. (Somewhat ironically.) That’s why we created a marketing ROI calculator tool, pictured below.

The free tool takes 12 months of benchmarking data to estimate the SEO impact, increased revenue, conversion rate, and in-store sales you can expect. It gives you the quantifiable evidence you need to begin your UGC for SEO strategy. Try it here.

You can check out the rest of our Long Read content here for more marketing strategies, tips, and insights.