April 9, 2026

It is a strange new etiquette: we let AI curate our options, but we don’t trust its taste.

We ask the digital oracle for a serum that ‘improves skin’ and get a recommendation promising ‘eternal radiance.’ Confident. Fluent. Completely unverifiable.

So why, after all that, do we still scroll to the reviews?

AI has fundamentally changed the buying journey, but not what closes the sale. Our research with 6,900+ shoppers confirms it: AI has become the starting point for discovery. But the reason to believe? That specific, human, all-too-real account of what it’s actually like to own this thing? That still lives in the review section.

It matters even more now.

AI is the gatekeeper. Reviews build consumer trust.

Think of AI as the ultimate personal assistant: brilliant at narrowing down 500 pairs of wireless headphones to the top three based on your needs. But once the AI makes its recommendation, the shopper doesn’t just hit ‘buy.’

They treat AI product reviews and AI-generated summaries as a hypothesis, one that requires human proof. They head straight to the ratings and reviews to see if the ‘noise-canceling’ feature actually survives a crying baby on a six-hour flight.

Our data shows 43% of consumers already use AI to help them shop, but they treat that output as a starting point, not a verdict.

Your AI strategy and your review strategy are the same workstream. Every review on your product detail page (PDP) either confirms the AI’s recommendation or overrules it.

What skepticism brings: Mind the trust gap

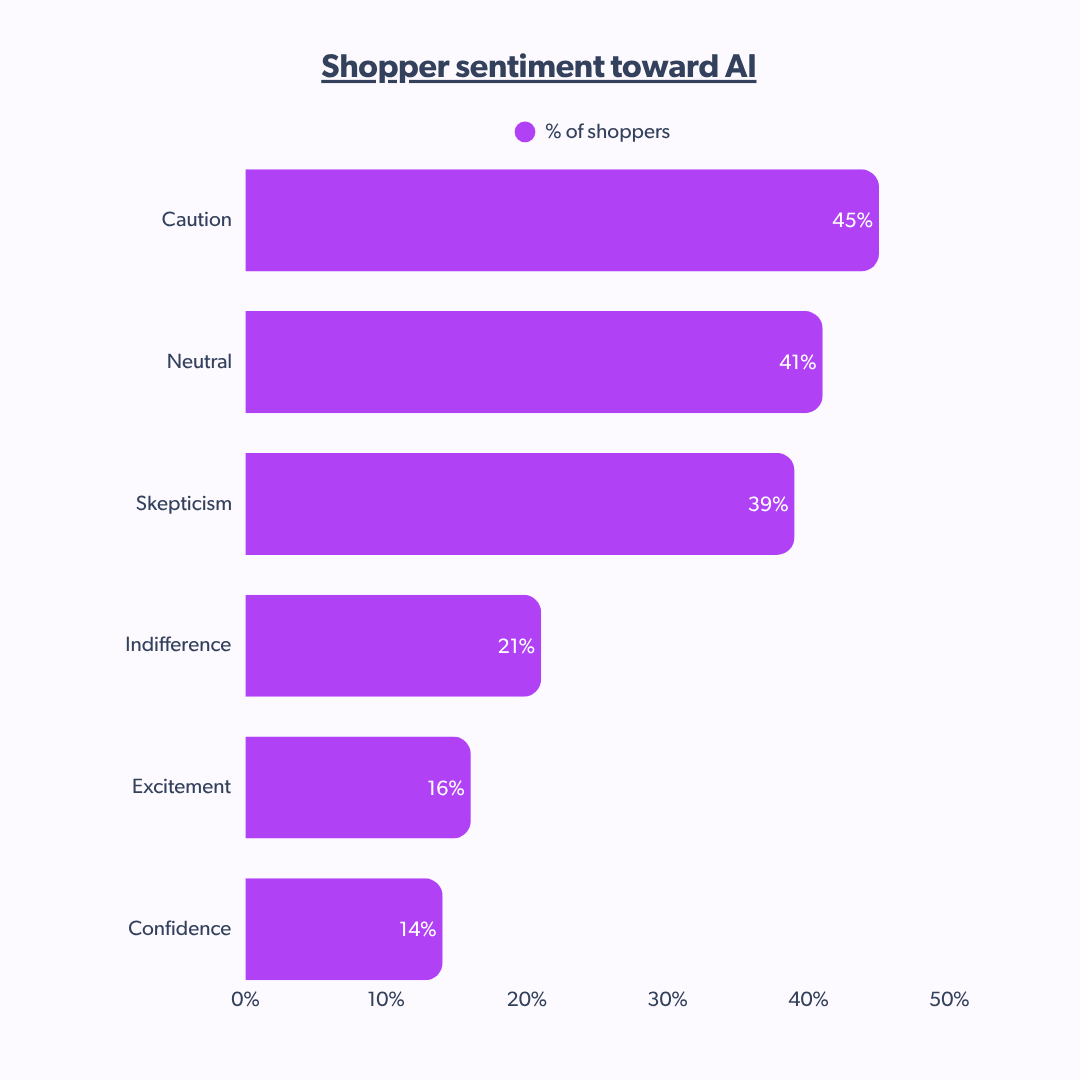

We’re living in a period of reserved sentiment. Before we discuss what good looks like, let’s acknowledge where consumer trust in AI actually sits.

As part of our research with 6,900+ shoppers, we most recently asked 1,300+ US shoppers:

What emotion comes to mind when you think about AI’s role in your shopping experience?

The dominant responses were not excitement.

But when shoppers know exactly what information an AI tool is using, their stance shifts from caution to confidence.

→ Less anti-technology; more pro-transparency.

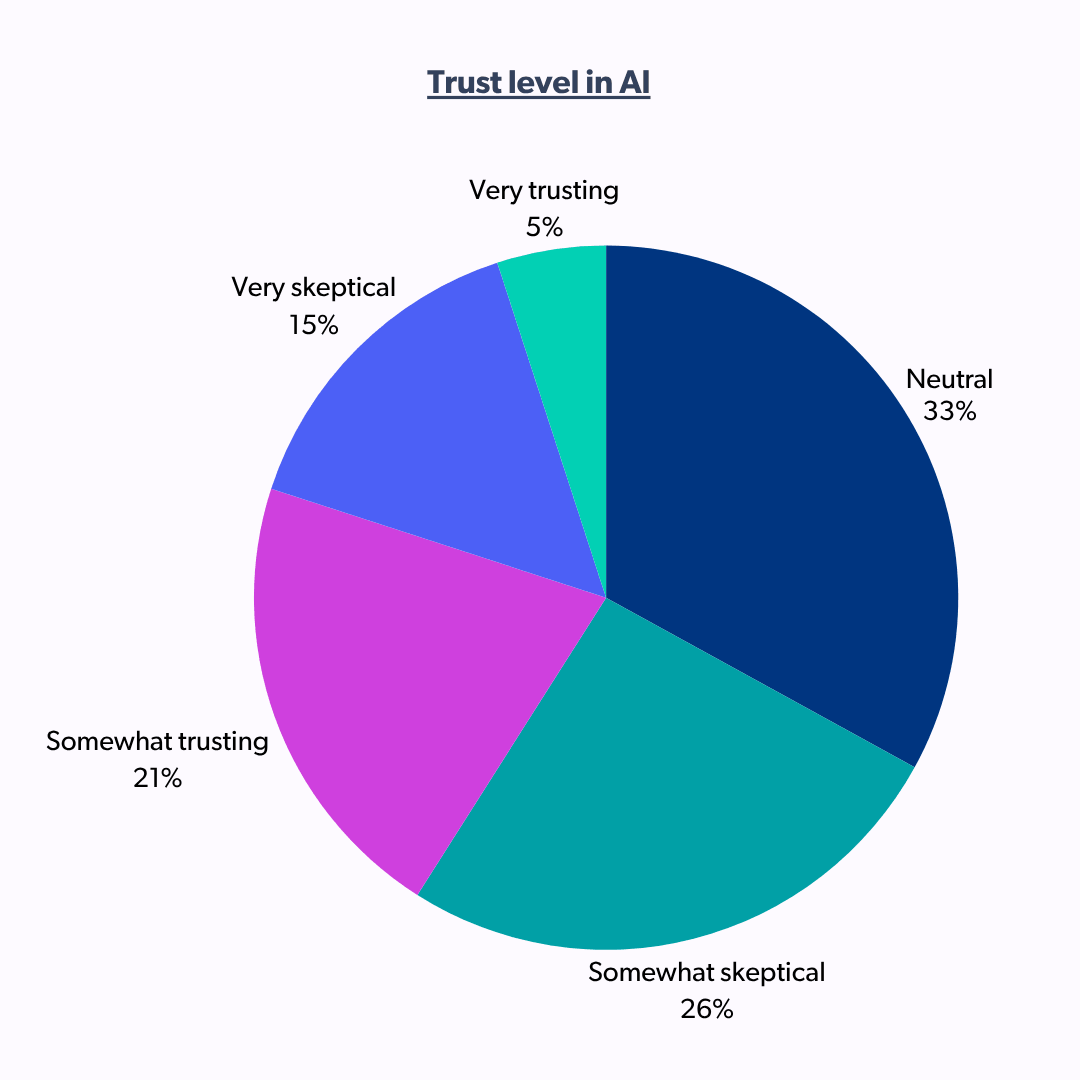

On trust specifically:

Shoppers are not rejecting AI; they are withholding judgment. They’re waiting for brands to lead with education rather than automation. The key? Confidence grows when brands are transparent about how they use AI.

For example, AI review summaries work best when they clearly credit the human reviews behind them. That transparency turns neutrality into trust.

Why AI product reviews still need a human heart

Shoppers have developed a keen nose for robotic content, a.k.a. the ‘AI ick.’ The data backs this up: 64% of shoppers said they do not consider a review written entirely by AI to be authentic.

As AI product reviews become more common, the value of a real, unmanipulated human voice appreciates.

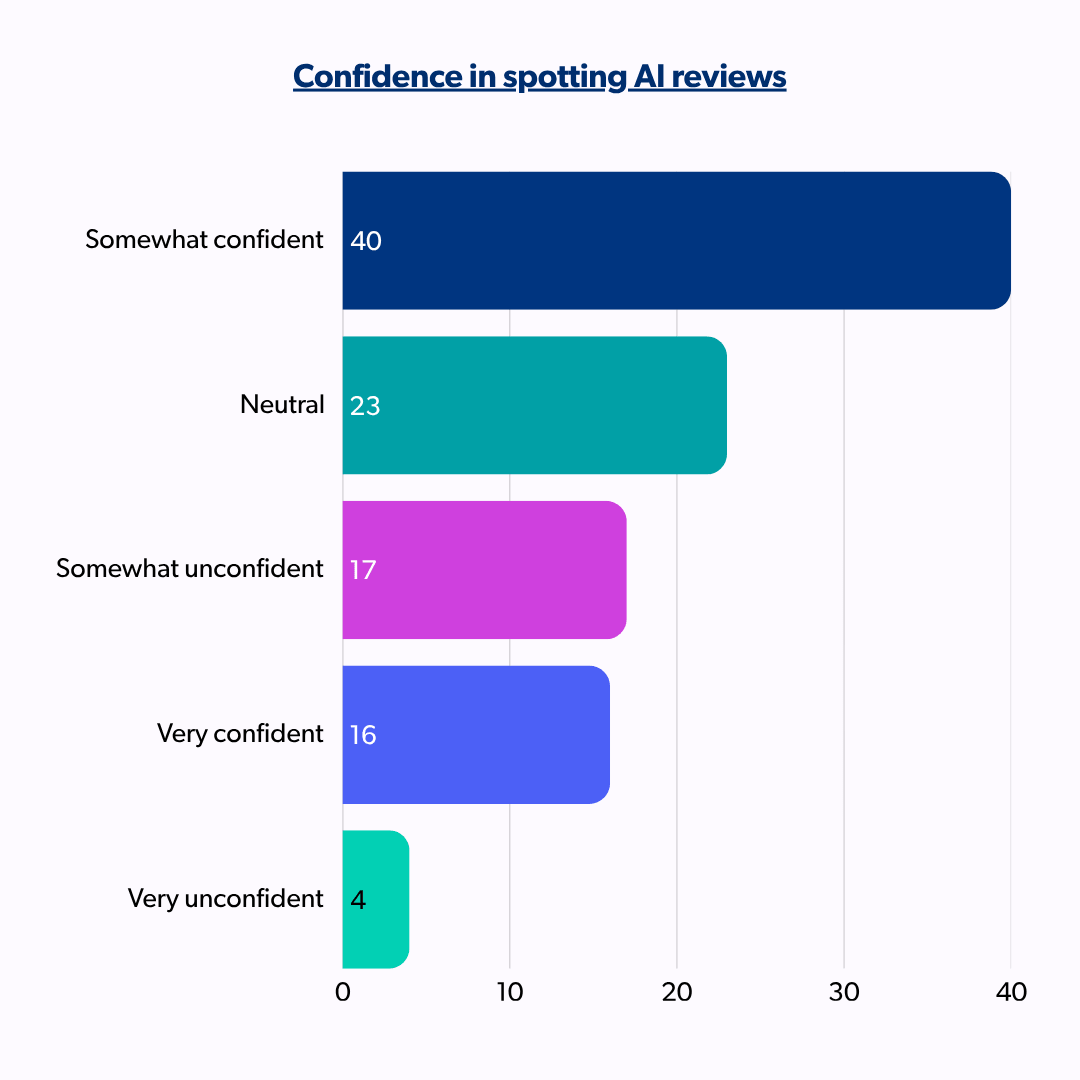

Shoppers are developing the skills to enforce this standard.

56% say they can spot an AI-written review. They’re active fact-checkers, running that sniff test on your PDP right now.

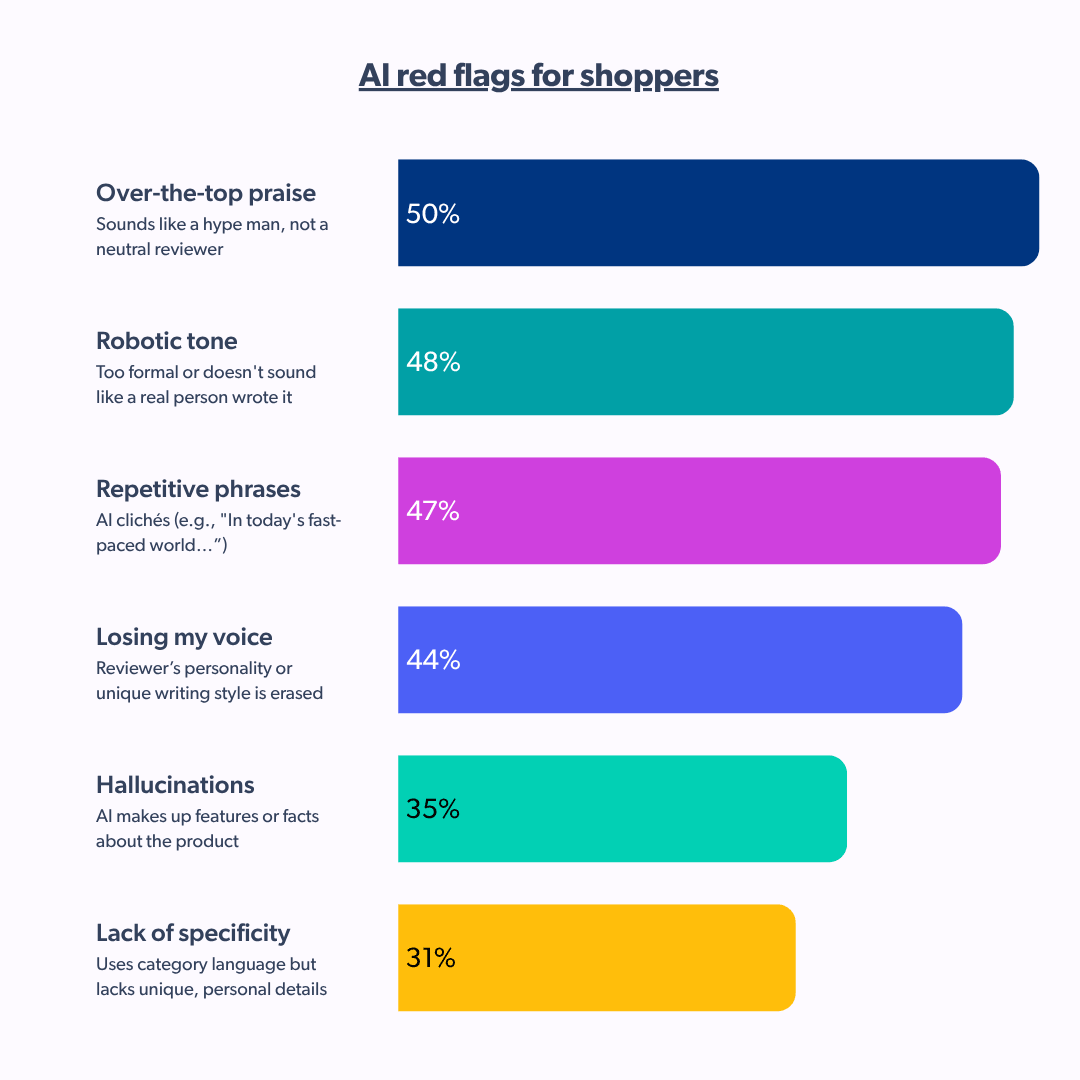

Silent killers of consumer trust

What triggers the shopper drop-off?

These aren’t vague concerns. They’re identifiable signals that shoppers use to disqualify a review the moment they spot them.

AI-generated reviews don’t cause drop-offs because they’re inaccurate. They fail because they carry no risk. Reviews are a transfer of trust: If I buy this vacuum, I’m risking my money; I want to hear from someone else who risked theirs.

An AI review writer carries no such risk, and shoppers can sense that lack of skin in the game.

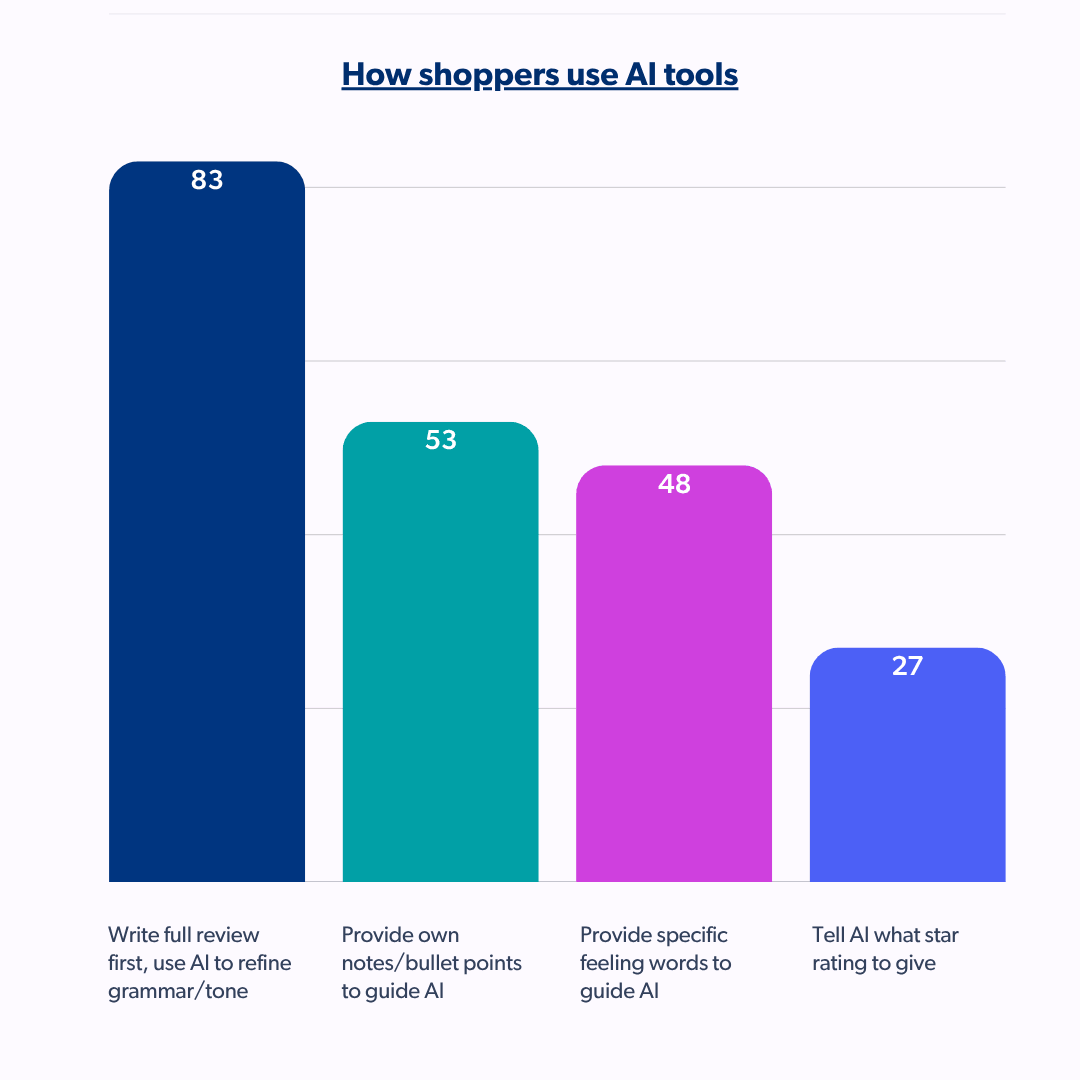

How 83% of reviewers use AI review writer tools correctly

8 out of 10 shoppers who use AI write the full review themselves first, then use AI to improve the grammar or shorten the length. The difference between using AI as a copy editor and using it as a ghostwriter is evident to other shoppers browsing their options.

27% of shoppers use AI to ghostwrite, and those reviews fail the sniff test. The challenge for brands is building systems that scale the good behavior and identify the rest.

The content coach approach: human-written, AI-assisted

The opportunity for brands is building a system that scales this behavior rather than replacing it.

A content coach does not write the review for the customer. It guides them to write a better one. How? By asking the right questions:

- What specifically did you notice about the fit?

- How did it compare to what you expected?

- What would you flag for other buyers?

Prompts like these unlock the specific, experience-driven detail that makes a review pass the sniff test, and that serves both the human reader and the AI recommender reading it. The market is ready: 47% of reviewers already engage with on-site suggestion tools.

A content coaching layer meets that instinct, producing reviews that are richer, more specific, and more trustworthy.

This is the investment that pays off twice.

Reviews built through a coaching model serve two audiences simultaneously: the human shopper who needs to validate the AI’s recommendation, and the AI agents themselves, which prioritize content that is specific, verified, and grounded in real-world product experience.

One content asset, optimized for both audiences simultaneously.

Designing a content ecosystem that scales without losing its soul is the biggest challenge of 2026. Watch our on-demand session, Defining authenticity, trust & responsibility in an AI reality, to discover the integrity guardrails that keep your brand’s voice human in a world of automated noise.

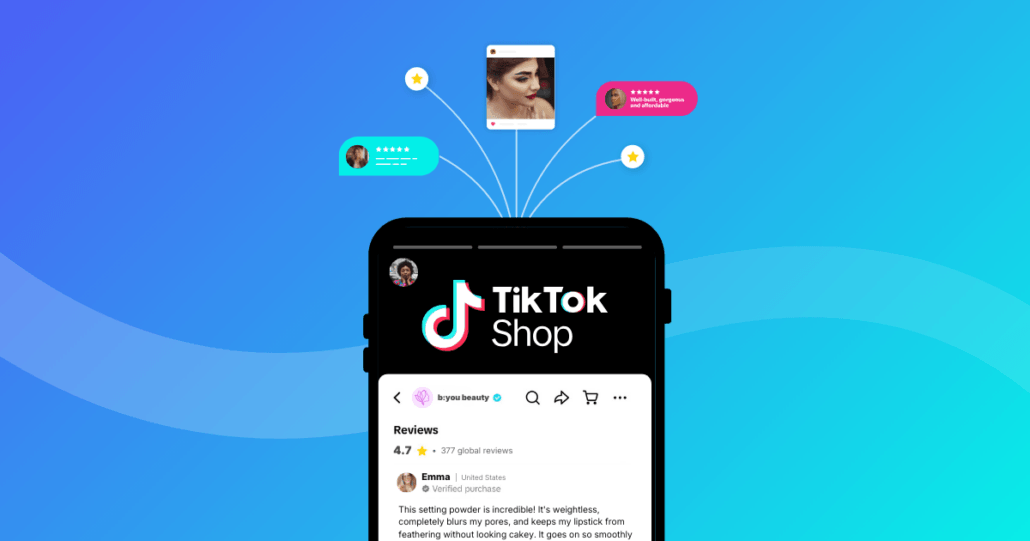

Why AI review summaries require verified data

Coaching improves quality at the individual review level. Verification makes trust systemic.

A verified purchaser marker backed by independent, third-party moderation doesn’t claim a review is real. It proves it. And proof, it turns out, is exactly what AI is looking for too.

As Bazaarvoice CTO Nick Shiftan explains:

“The combination of these agentic experiences with trusted ratings and reviews could unlock a golden age of personalized shopping experiences.”

In practice, AI could discuss needs, understand complex use cases, and guide consumers to exactly the right product. But that only works if the content those AI agents draw on can be trusted at a foundational level.

This is what good looks like: a content ecosystem where reviews are real, unmanipulated, and verified. One that eliminates hesitation, reduces skepticism, and reinforces consumer trust at exactly the moment a shopper is deciding whether to buy.

The answer was in the reviews all along

Back to that original question:

If AI sounds so authoritative, why do we still scroll to the reviews?

Because authority and truth are not the same thing. AI can read the entire internet, summarize it, and reach a conclusion, but it cannot tell you whether that serum caused breakouts in week 3 or whether the pump gave out after 2 months.

That knowledge lives in reviews, as it always has. It carries more weight now because the AI recommendation sitting above it has raised the stakes of what ‘trustworthy’ actually means.

Brands that understand this aren’t treating reviews as legacy content. They’re treating them as the data layer that determines whether AI recommends them or their competitor. The human voice in your review section is not competing with AI.

It’s what makes AI recommendations trustworthy.

Want to put this into practice?

Next steps: Start with The AI-ready content toolkit, a practical guide to structuring your review content for the AI-driven shopping era. And for a deeper look at why reviews remain the most powerful conversion asset you own, read Why ratings and reviews matter for your business.